京都大学 情報学研究科 通信情報システム専攻 2020年8月実施 専門基礎A [A-5]

Author

Description

Answer all the following questions.

(1)

(a) Find the value of the entropy of

(b) Consider the

(c) Compare the entropy in Question (a) and the expected codeword length per symbol in Question (b). Explain which should be larger and the reason.

(d) Explain whether the expected codeword length per symbol in Question (b) increases or decreases as

(e) An information source

(f) Find the stationary distribution of

(g) Find the value of the entropy of

(2)

Answer the following questions related to channel coding. Let

(a) Determine whether

(b) Find the codeword polynomial for the message polynomial

(c) Find the minimum distance of

(d) Find the maximum number of error bits corrected by

(e) Consider communications with

(f) Explain the channel coding theorem.

Kai

(1)

(a)

(b)

The symbol probabilities are:

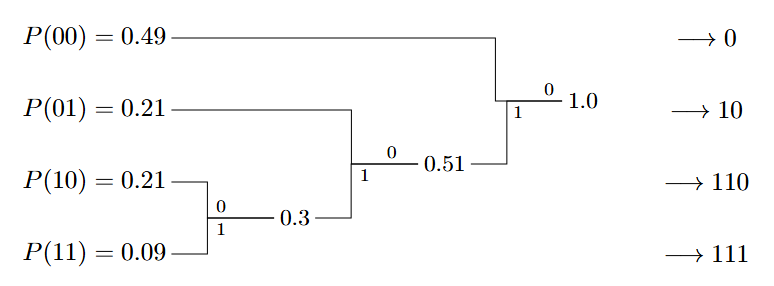

Construct Huffman Code:

Average Code Length (

Average Code Length per Symbol (

(c)

According to Shannon's source coding theorem, the entropy of a source provides the ultimate lower bound on the average length of any lossless code for that source.

The source

(d)

The expected code length per symbol decreases as block length

As

(e)

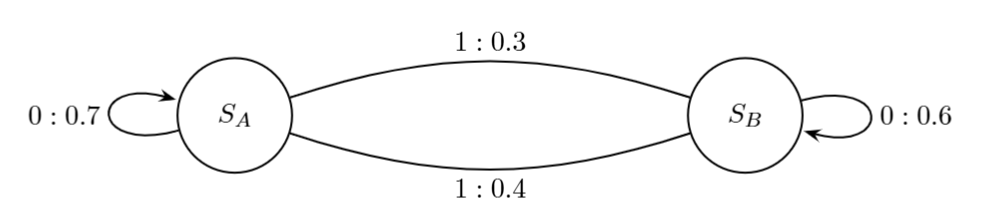

(f)

Let the stationary probabilities be

(g)

Substitute into

(2)

(a)

A polynomial is a codeword if it is divisible by the generator polynomial

So this is a codeword polynomial.

(b)

The systematic form is given by

- Shift:

- Modulo:

- So remainder

- So remainder

- Codeword:

(c)

For

- Since

- Minimum distance

(d)

The maximum number of correctable error bits is 1.

(e)

Probability of error occurring in a codeword (assuming correction of 1 bit):

(This represents the probability of having 2 or more errors).

(f)

- Achievability: For any data transmission rate

- Converse: If